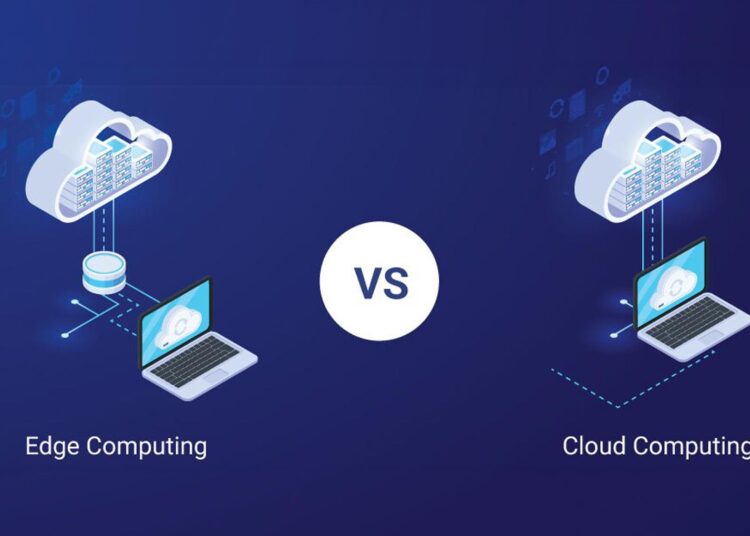

With technology advancing so quickly, it has never been more important to understand how and where data is processed. As companies across the globe continue to digitize, two computing models, cloud computing and edge computing, are at the forefront. Both process data effectively, but they differ in where and how they do it.

Let us look at what each model involves, the main differences between them, and why understanding those differences matters for your business or personal tech needs.

What is Cloud Computing?

Cloud computing refers to the delivery of computing resources such as storage, databases, networking, software, and processing power over the internet. Instead of relying on local servers or individual computers, cloud infrastructure pools these resources in massive data centers owned and operated by cloud providers like AWS, Google Cloud, or Microsoft Azure.

Why Cloud Computing Matters

- Scalability: The cloud allows users to scale up or down according to their needs.

- Accessibility: Applications and data can be accessed from any location that has an internet connection.

- Cost-Efficiency: As a pay-as-you-go service, cloud computing removes the need for large upfront investments in infrastructure and hardware.

Cloud computing is widely used for hosting applications, storing data, and running enterprise software. It is not without drawbacks, however, particularly when data must be processed in real time.

What is Edge Computing?

Edge computing, in contrast, brings data processing closer to the point where data is created, whether that is a local server, an edge node, or an IoT device. Instead of pushing everything to the cloud, edge computing processes data where it is needed, resulting in faster response times and reduced network bandwidth usage.

Why Edge Computing Matters

- Low Latency: It is well suited to applications that require real-time processing, such as autonomous vehicles, smart factories, and real-time health monitoring.

- Bandwidth Efficiency: Edge computing reduces the need to continuously transmit data to distant servers, thereby conserving bandwidth.

- Improved Reliability: With local processing, systems keep running even when internet connectivity is limited or unavailable.

Edge computing is particularly useful in sectors where decisions must be made in a split second and delays are costly.
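The bandwidth point above can be made concrete with a minimal sketch. It assumes an edge device that summarizes a window of sensor readings locally and uploads only the summary. The function name `summarize_window` and the sample temperature data are hypothetical, chosen purely for illustration.

```python
import json
from statistics import mean

def summarize_window(readings):
    """Reduce a window of raw sensor readings to a compact summary."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
    }

# Hypothetical window: 60 temperature samples collected on the device.
window = [20.0 + (i % 5) * 0.1 for i in range(60)]

# Compare the size of sending every reading with sending only the summary.
raw_payload = json.dumps(window)
summary = summarize_window(window)
summary_payload = json.dumps(summary)

print(f"raw: {len(raw_payload)} bytes, summary: {len(summary_payload)} bytes")
```

The trade-off is that the cloud no longer sees individual readings, so this approach fits cases where aggregate values are enough for later analysis.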

Main Differences Between Cloud and Edge Computing

While both cloud and edge computing process data, they differ significantly in where and how that data is processed.

Location of Processing

Cloud computing centralizes processing in large, distant data centers, so data has to travel over networks before it can be processed. Edge computing shifts this processing closer to the data source, for example onto IoT devices or nearby edge nodes.

Latency

Cloud computing involves greater latency because data must travel long distances before it is processed in cloud data centers. This can result in slower response times. Edge computing processes data locally, which keeps latency low and makes it well suited to real-time applications that depend on rapid decision-making.
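A rough back-of-the-envelope calculation shows why distance matters. The sketch below estimates only the propagation delay, using the fact that light travels through optical fiber at roughly 200,000 km/s. The two distances are hypothetical, and real-world latency will be higher once routing, queuing, and processing time are added.

```python
# Light in optical fiber travels at roughly two-thirds of its speed
# in a vacuum, about 200,000 km/s.
FIBER_SPEED_KM_PER_S = 200_000

def round_trip_ms(distance_km):
    """Minimum round-trip propagation delay in milliseconds for a one-way distance."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000

cloud_rtt = round_trip_ms(2000)  # hypothetical distance to a cloud region
edge_rtt = round_trip_ms(1)      # hypothetical distance to an on-site edge node

print(f"cloud: {cloud_rtt:.2f} ms, edge: {edge_rtt:.2f} ms")
```

Under these assumptions, the cloud round trip costs about 20 ms before any processing starts, while the edge round trip is a small fraction of a millisecond.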

Internet Dependency

Cloud computing relies heavily on a stable internet connection, and cloud services are severely disrupted without one. Edge computing, by contrast, can keep operating with limited or no internet connectivity because most of the processing is local.
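One common way to tolerate an unreliable connection is a store-and-forward buffer: the edge node keeps results locally and uploads them once the link returns. The sketch below is illustrative only. The `EdgeBuffer` class, its drop-oldest policy, and the `uploaded` list standing in for a cloud endpoint are all assumptions.

```python
from collections import deque

class EdgeBuffer:
    """Holds processed results locally while the uplink is down."""

    def __init__(self, max_items=1000):
        # Assumed policy: once the buffer is full, the oldest items are dropped.
        self.pending = deque(maxlen=max_items)

    def record(self, item, online, send):
        """Queue the item, then flush everything if the uplink is available."""
        self.pending.append(item)
        if online:
            self.flush(send)

    def flush(self, send):
        """Send queued items in the order they were recorded."""
        while self.pending:
            send(self.pending.popleft())

uploaded = []  # stands in for a cloud endpoint
edge_buffer = EdgeBuffer()
edge_buffer.record({"temp": 21.5}, online=False, send=uploaded.append)
edge_buffer.record({"temp": 21.7}, online=False, send=uploaded.append)
edge_buffer.record({"temp": 21.6}, online=True, send=uploaded.append)
```

The first two readings are held while the node is offline, and all three are delivered in order once the third call reports that the link is back.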

Use Cases

Cloud computing is better suited to use cases like web applications, enterprise IT, and big data analytics, where responses are not needed in real time. Edge computing is better suited to use cases like IoT devices, autonomous vehicles, augmented reality (AR), and industrial automation, where data must be processed in real time and feedback must be immediate.

Why It Matters

The choice between edge and cloud computing depends mostly on the specific needs of a business or application. As the digital world becomes more interconnected, businesses are increasingly combining both models to improve efficiency, reduce latency, and support innovation.

For example, in the healthcare sector, edge computing can monitor patients’ vital signs in real time, while cloud computing provides large-scale storage and data analysis. Similarly, in retail, edge devices can process customer activity in real time, while the cloud handles inventory and analytics.

Finally, these two computing models are not rivals; they are complementary. Hybrid models that draw on the strengths of both edge and cloud computing are emerging, giving companies the freedom to balance performance and affordability.
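A hybrid setup needs some rule for deciding where each task runs. The sketch below shows one simple, assumed policy: keep a task on the edge when the cloud round trip alone would exceed its latency budget, or when there is no connection. The `choose_tier` function, the task names, and all the numbers are hypothetical.

```python
def choose_tier(latency_budget_ms, cloud_rtt_ms, online=True):
    """Return "edge" or "cloud" for a task under a simple, assumed policy."""
    if not online:
        return "edge"   # no uplink: the work has to stay local
    if cloud_rtt_ms > latency_budget_ms:
        return "edge"   # the cloud round trip alone would exceed the budget
    return "cloud"      # otherwise use the cloud's larger capacity

# Hypothetical tasks and latency budgets in milliseconds,
# loosely following the healthcare and retail examples above.
tasks = {
    "vital_sign_alert": 10,
    "nightly_inventory_report": 60_000,
}

placement = {
    name: choose_tier(budget, cloud_rtt_ms=40)
    for name, budget in tasks.items()
}
print(placement)
```

A production scheduler would also weigh cost, data volume, and privacy constraints; this sketch only captures the latency and connectivity factors discussed in this article.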

As technology keeps advancing, businesses that care about innovation and competitiveness need to understand what edge computing and cloud computing are each best used for. Whatever you value most, whether real-time processing, scalability, or availability, choosing the right computing model can significantly improve performance and efficiency.

By using both technologies where each fits best, businesses can get the best of both worlds and prepare themselves for the future of computing.